Database Refresh (RDB) is a procedure for transferring a database, which includes transactions and financial reporting data, from a source environment to a target environment. Typically, the source environment is the PROD environment, and the target environment is one of the sandbox UAT environments, such as UAT01. This process will overwrite data in target environment.

In this post, I will outline a To-Do list that can be followed before and after the RDB process. The aim of this post is to assist D365FO users in implementing a strategy for the RDB process and reducing the risk of potential data loss, particularly related to setup and configuration, in the target environment following the RDB process.

Check list:

- The source environment and the target environment must be in the same product version.

- The RDB process deletes data from the target environment (i.e. data is overwritten with the source environment’s data).

- If necessary, it may be considered to export a database of the target environment before RDB (bacpac in the Asset Library).

- Inform users about RDB start time well in advance, so if needed, they have time to take a backup of any data that is only available in the target environment (e.g. electronic reporting Config and custom roles).

- Inform the CE team with RDB for the target environment in good time and possibly do the same RDB on the CE target environment as well.

- Pay attention to CE integrations (e.g. In a case that you have Dual Write setup, this link should be removed before RDB)

- Appoint a time for RDB and post a downtime message in the target environment via LCS (i.e. Broadcast a new message for downtime).

- Initiate the RDB process at off-peak hours when there is least activity according to transactions and running batch jobs in the source environment. It is recommended to define an active period for all recurring batch jobs, including BYOD jobs for DMF, and then you can start the RDB process after the active period.

- Limit RDB to one time from the source environment.

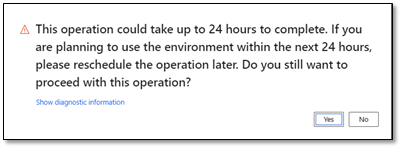

- The RDB process will take time according to amount of data need to be copied.

- When data is copied from the source environment to the target environment, the target environment (e.g. UAT01) will be down while the source environment is up. It is recommended that the source environment not be used by during this process.

- The target environment should be up again when the process is complete. If your email is existed on the “Notification list” for the target environment, you will receive an auto-email regarding the status of the environment.

- No files will be copied from source Azure blob storage.

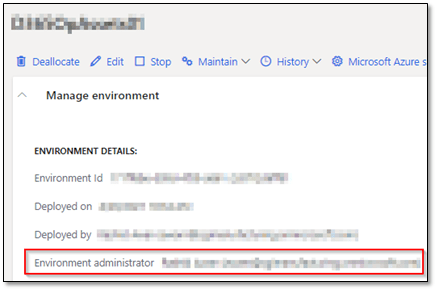

- After the RDB process, only the “Environment administrator” in the target environment should be an active user in the environment.

- If needed, just in time access to target environment for access Management Studio to truncate or update required tables after RDB.

To-Do (before RDB):

There are some tasks that can be done before the RDB process. You need to take a backup the following tables in the target environment. It can be done either by exporting data to Excel via Excel add-in or data management or using Management Studio.

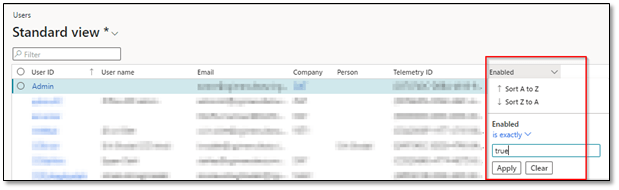

· Active users

(System administration > Users > Users): Take an export of active users in the target environment to Excel (Enabled = true).

Note: Some users only exist in the target environment!

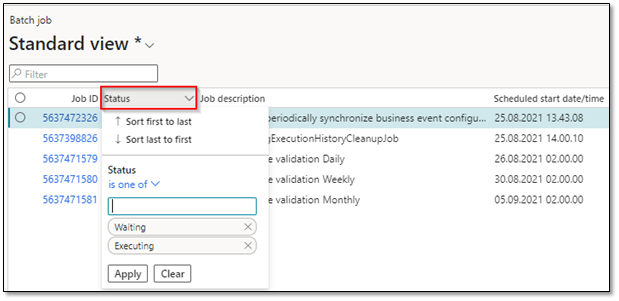

· Active batch jobs

(System administration > Inquiries > Batch jobs): Export active batch jobs in the target environment to Excel (Status is one of = Waiting and Executing).

· Specific setup

There can be specific setups that users have created only in target environment. The following is a list of tables that can be reviewed on a backup:

Note: If needed, you can evaluate to export target environment database to LCS before RDB as a backup.

–Custom roles

(System administration > Security > Security configuration): It can be exported manually or via data management).

–Custom electronic reporting configurations

(Workspaces > Electronic reporting > Reporting configuration – It can be downloaded by selecting the config and press Exchange > Export as XML File).

Note: Remember to export data models and model mappings associated with this custom ER config.

–Module setup

Setups that are only available in the target environment for workshops that will eventually be reused in Prod (e.g. setup for Asset Leasing module). It can be exported via data management.

· Microsoft Entra ID applications (Azure Active Directory applications)

(System administration > Setup > Microsoft Entra ID applications (Azure Active Directory applications)): export “Client Id”, “Name” and “User ID”.

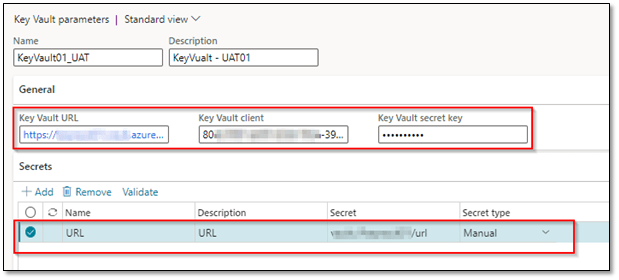

· Key Vault parameters

(System administration > Setup > Key Vault parameters): export credential and all secrets.

Note: Remember to open this form in DAT Company.

Note: In order to find “Key Vault secret key”, you need to have sysadmin role and you can get this value on Options > Record info > Show all fields).

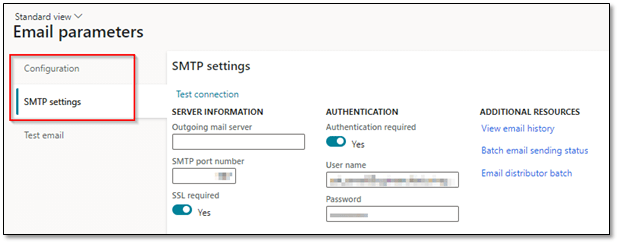

· Email parameters

(System administration > Setup > Email > Email parameters): export SMFTP setting (e.g. user name and password) and configuration.

Note: If needed, you can evaluate to export “System email templates” (System administration > Setup > Email > System email template).

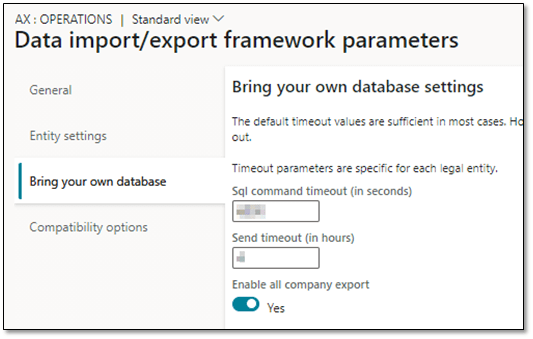

· Data management

o Framework parameters

(Workspaces > Data management > Framework parameters): If there is a specific parameter on target environment, you can try to export them before RDB.

- Entity settings > General

- Entity settings > Configure entity execution parameters

- Bring your own database > Sql command timeout / Send timeout

o Entity store parameters

(Workspaces > Data management > Configure entity export to database): You can take backup for ‘Source name’, ‘Description’, ‘Type’ and ‘Connection string’.

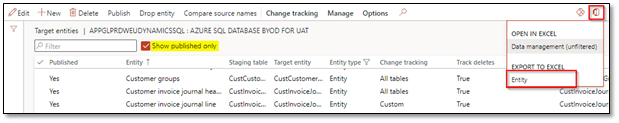

o Published entities for Azure SQL Database in the target environment

(Workspaces > Data management > Configure entity export to database> Publish): You can export published entities to Excel (Show published only = Yes).

Note: ‘Change tracking’ for published entities can be found in this list.

· ISV specific parameters for the target environment

Some ISVs have their specific setup on target environment that deviate from the source environment. For example, ISV for AP (i.e. Accounts Payable) has its own setup for test web solution (i.e. URL, username, password and s/ftp setup for importing incoming invoices in D365FO).

Some of those tables are global/shared but some are company-specific which require us to export data for all the companies. When it comes to company-specific setup, it can be a good idea to check if you can excel add-in to export data for several companies or run a script on the target environment via SQL to take a backup.

To-Do (after RDB):

When the RDB process is completed, only the ‘Environment administrator’ for the target environment will be the active user in the solution. For the first starting point, the ‘Environment administrator’ can enable users who need to work on updating the target environment data after RDB.

These specific users should first start using tasks that already mentioned on To-Do (before RDB) to update the tables (i.e. remove source environment data and replace it with target environment data for those tables). For updating the tables, we can use script or use data management or Excel add-in, while some tasks can be done manually (e.g. importing custom electronic reporting config).

You can use the following order:

- Create missing users.

- Delete and create the correct value (s) for the Azure Active Directory application.

- Delete and create the correct value (s) for Key Vault parameters (i.e. in DAT Company).

- Delete and create the correct value (s) for Email parameters.

- Delete and create the correct value (s) for ISV specific setup on target environment (i.e. It can be done either via running script or using Excel add-in).

- Create the correct entity store, refresh entity list and publish necessary entities against it (i.e. You can either try to delete the entity store from source environment via SQL and create the specific one for target environment, or add a new entity store for target environment or reuse the entity store by changing the connection string and then publish the required entities).

- Import custom electronic reporting config.

- Import custom security roles.

- Enable required users.

- Run appropriate batch jobs.

- If you use dual write, the integration needs to be configured again, and mapping needs to be imported.

- If there is a service bus integration, queues needs to be checked for messages and if needed, get purged.

I hope this post has provided valuable insights on creating a process for database refresh in D365FO to prevent potential issues related to production references or data loss caused by overwriting data from the source to the target environment. If you have any further questions or feedback, please don’t hesitate to use the ‘Leave a Reply’ feature. Your thoughts and inquiries are greatly appreciated!

Useful links:

https://docs.microsoft.com/nb-no/dynamics365/fin-ops-core/dev-itpro/database/database-refresh

2 Comments Add yours